20.10.1 Release is available

VisionLib Release 20.10.1 is now available at visionlib.com.

This is a major update for VisionLib. It adds ARFoundation support, a true highlight for Unity development. Additionally, we’ve updated the VLTrackingConfiguration component, making it even easier and more consistent for developers to configure tracking setups.

The new Plane Constrained Mode improves performance if the tracked object is located on a flat surface. For enterprise use, we’ve added support for industrial uEye cameras. Read on.

Watch all Updates in the What's New Video:

ARFoundation Integration:

Use VisionLib's premier Model Tracking together with ARKit & ARCore in one Session

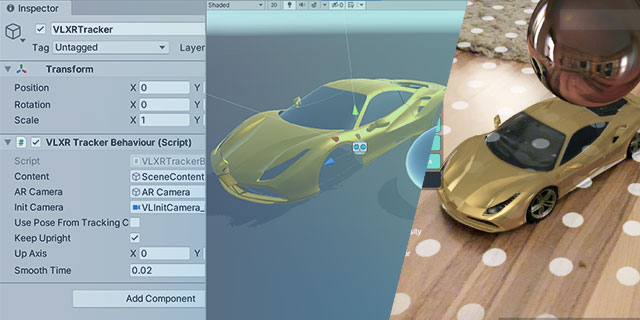

The new release brings ARFoundation support, which gives you full access to the ARKit / ARCore session while using VisionLib. Developers can now combine our Model Tracking with features such as plane detection, light estimation, or environment probes to blend content even more seamlessly with reality.

We’ve added the ARFoundationModelTracking example scene to the Experimental Package, which you can download from the customer area. The ARFoundation integration in VisionLib is still in beta, and we welcome feedback that helps us improve it.

VLTrackingConfiguration Upgrade

Since its introduction in Release 20.3.1, the VLTrackingConfiguration component has made it easier to configure VisionLib projects by providing a centralized place to reference tracking-related information.

Besides useful developer options to autostart tracking or to choose the input source at runtime, we’ve added even more options: you can now set the tracking configuration, the license, and calibration data all in one place, either by dragging and dropping the assets into the corresponding fields or by using URI strings (with the possibility to use schemes and queries).

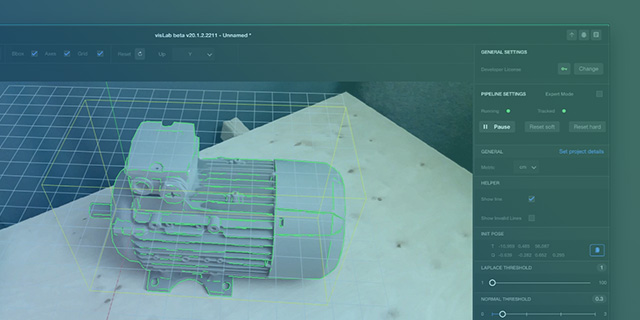

Plane Constrained Mode

With this new feature, you can improve performance when the tracked object is located on a flat surface and tracking is extended by SLAM. This is especially useful for objects that stand on the ground or on a table, because tracking then excludes tilted positions that such objects can’t take. As long as the mode is enabled, tracking will only try to find poses that align the model’s up vector with the world’s up vector.
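To make the constraint concrete, here is a minimal sketch of the geometric idea, not VisionLib's implementation. It checks whether a candidate rotation keeps the model's up vector aligned with the world's up vector. The Y-up convention, the function names, and the 1-degree tolerance are all assumptions chosen for illustration.

```python
import math

WORLD_UP = (0.0, 1.0, 0.0)  # assumption: Y-up world, as in Unity


def rotate(rotation, v):
    """Apply a 3x3 row-major rotation matrix to a 3-vector."""
    return tuple(sum(rotation[r][c] * v[c] for c in range(3)) for r in range(3))


def tilt_angle_deg(rotation, model_up=(0.0, 1.0, 0.0)):
    """Angle in degrees between the rotated model up vector and world up."""
    up = rotate(rotation, model_up)
    dot = sum(a * b for a, b in zip(up, WORLD_UP))
    norm = math.sqrt(sum(a * a for a in up))
    cos_angle = max(-1.0, min(1.0, dot / norm))  # clamp against rounding
    return math.degrees(math.acos(cos_angle))


def satisfies_plane_constraint(rotation, tolerance_deg=1.0):
    """True if the pose keeps the model upright (tolerance is hypothetical)."""
    return tilt_angle_deg(rotation) <= tolerance_deg


def rotation_y(deg):
    """Rotation about the up axis: turning an object on a table."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def rotation_x(deg):
    """Rotation about a horizontal axis: tilting the object."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]
```

In this sketch, any rotation about the up axis passes the check, while a tilted pose such as `rotation_x(30.0)` is rejected. Excluding such poses is what narrows the search.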

To enable/disable the Plane Constrained Mode, we’ve added a checkbox to the AdvancedModelTracking scene, and voice commands to the HoloLens example scenes, for demonstration purposes.

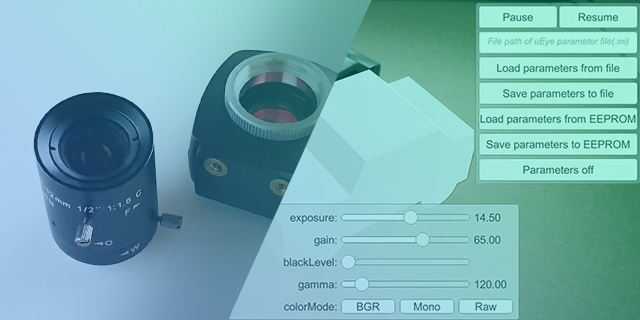

uEye Support – AR with Industrial Cameras

VisionLib has always had a growing base of industrial users. The new SDK now supports IDS uEye devices, a type of high-end industrial camera, in order to meet quality and accuracy demands in areas such as automated manufacturing, inspection, and robotics. Using VisionLib together with uEye may be particularly useful if your use case is characterized by some of the following requirements:

- high accuracy demands and the need for high resolution tracking with global shutter images

- more fine-grained control over the image capturing and image generation parameters

- wired connection over large distances (e.g. via Ethernet)

- static camera setup and a moving object

- special lenses for wide or narrow fields of view

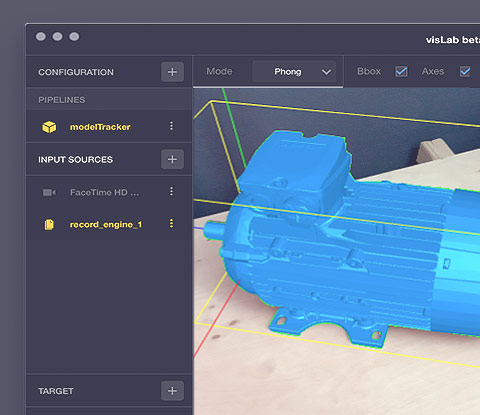

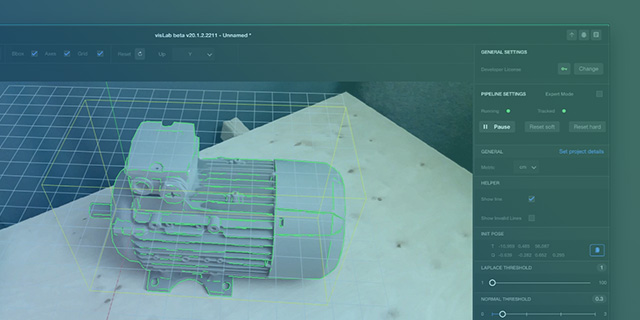

With every new VisionLib release, there is an update to VisLab. Get it now.

General Updates

The new release comes with many more updates and bugfixes. Here is an overview of the most relevant ones:

- We added the scheme streaming-assets-dir, which can be used to reference assets relative to Unity’s StreamingAssets folder, usable in Unity as well as in configuration files.

- We cleaned up the use of the VisionLib Schemes and removed the VLWorkerBehaviour.baseDir parameter from the VLCamera and the VLHoloLensTracker. Scheme names are now case-insensitive. For more on this topic, read the file access article.

- VLTrackingAnchors, which are used in multi-model tracking, can now be enabled and disabled at runtime.

- We’ve also added a smoothTime parameter to VLTrackingAnchor to smoothly update anchor transforms (i.e. prevent / reduce jumping) – have a look at the ARFoundation example scene to get a demo of its usage.

- Stopping the tracking will now result in a black image instead of a frozen camera image.

- When setting the camera resolution to high on iOS using ARKit SLAM, the display image will no longer be scaled down.

- The tracking will now be restarted when re-enabling the VLTrackingConfiguration at runtime while Auto Start Tracking is set.
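To illustrate the scheme-related notes above, the following is a rough sketch of how a scheme-prefixed URI with an optional query could be resolved to a path. It is not VisionLib's resolver: the `scheme:path?key=value` syntax, the `resolve_uri` helper, the base directory, and the query parameter names are all assumptions made for this example.

```python
# Hypothetical mapping from scheme name to a base directory. The path below is
# a placeholder, not a location that VisionLib or Unity defines.
SCHEME_ROOTS = {"streaming-assets-dir": "/app/StreamingAssets"}


def resolve_uri(uri, roots=SCHEME_ROOTS):
    """Split 'scheme:path?key=value&...' and map the scheme to a directory."""
    scheme, sep, rest = uri.partition(":")
    if not sep:
        raise ValueError(f"no scheme in URI: {uri!r}")

    # Compare scheme names case-insensitively.
    lookup = {name.lower(): root for name, root in roots.items()}
    key = scheme.lower()
    if key not in lookup:
        raise KeyError(f"unknown scheme: {scheme!r}")

    # Everything after the first '?' is treated as the query string.
    path, _, query = rest.partition("?")
    params = dict(pair.partition("=")[::2] for pair in query.split("&") if pair)

    return lookup[key].rstrip("/") + "/" + path.lstrip("/"), params
```

Called with `"Streaming-Assets-Dir:VisionLib/car.vl?debug=1"`, this sketch returns the joined path under the placeholder directory together with `{"debug": "1"}`, regardless of how the scheme name is capitalized.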

Introducing VisionLib Blog

News, Updates & Dev-Talk, All in one Place

We’re proud to introduce our all-new Blog pages. Here, we’ll report on minor & major updates and let you keep track of upcoming & past events, such as our joint talk with SAP on Object Tracking, Smart Factories & the XR Cloud at the VR/AR Global Summit in September.

Stay updated on social media: follow us on LinkedIn and Twitter, and have a look at our YouTube channel. We share insights into current developments, present upcoming features, and share exciting work that partners & customers have created using VisionLib.

Stay healthy and stay tuned.

Have you seen our latest #tech videos? See how VisionLib lets you track & check pieces while working.

Learn VisionLib – Workflow, FAQ & Support

We help you apply Augmented and Mixed Reality in your project, on your platform, or within enterprise solutions. Our online documentation and Video Tutorials grow continuously and deliver comprehensive insights into VisionLib development.

Check out these new articles: Whether you’re a pro or a starter, our new documentation articles on understanding tracking and debugging options will help you get a clearer view of VisionLib and Model Tracking.

For troubleshooting, look up the FAQ or write us a message at .