Introducing A New Workflow in Unity: Integrating Model Tracking Has Never Been Easier

VisionLib 3.0 is an even more powerful and user-friendly release: you can now integrate object tracking into your own AR/XR platforms faster and more efficiently.

The update also includes extensive optimization of the algorithms for stability, memory consumption, and runtime performance.

Update to VisionLib 3.0 today at no additional cost. The updated version works with your current licenses.

Live Webinar

Introducing VisionLib 3.0

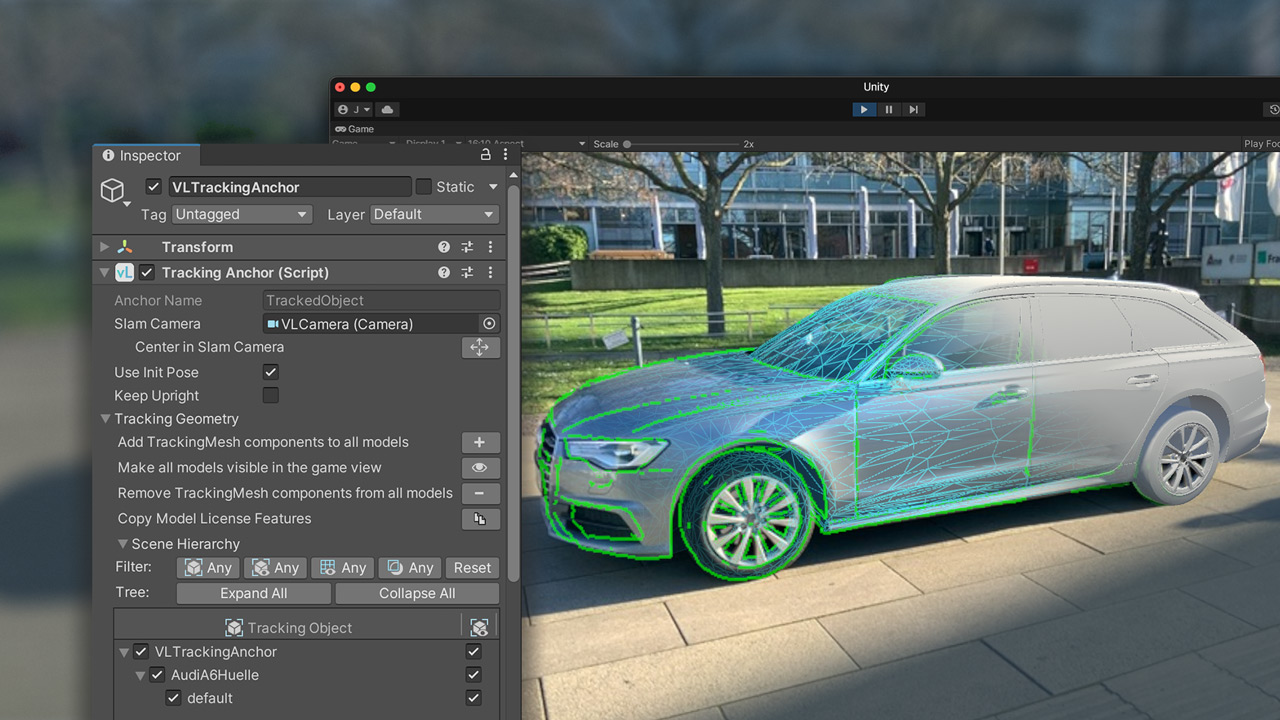

New Scene Setup

The new version lets you set up the entire tracking in Unity. You no longer need to use an external editor or manually modify .vl configuration files.

New shortcut functions and buttons help simplify the workflow even further.

Models are now always loaded from Unity into VisionLib. This way, every model in Unity’s Hierarchy can also be used for tracking.

The result: Developing your AR applications is faster and less error-prone. It is also easier to integrate VisionLib into an existing AR application.

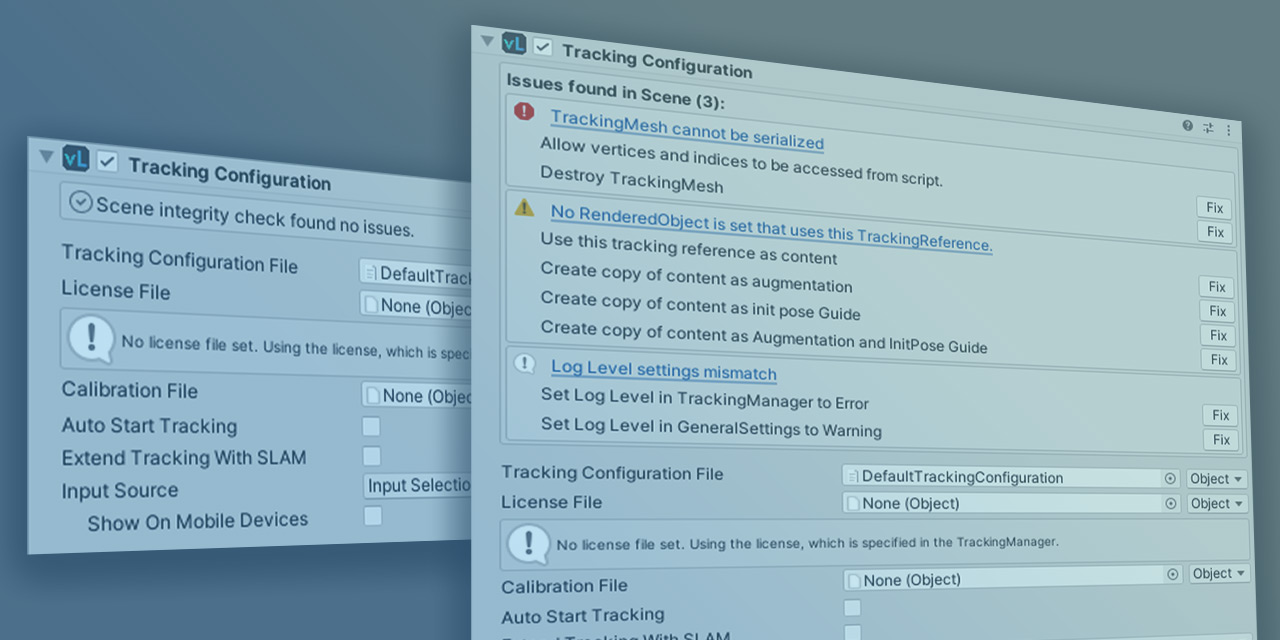

Scene Setup Validation

VisionLib validates the tracking setup in Unity and suggests solutions for the problems it detects.

The result: Debugging the tracking gets easier. Unexpected behavior and possible errors resulting from an incorrect scene setup are drastically reduced.

Learn How To Get Started

Use our updated ›QuickStart Guide‹ to get started quickly with the new setup:

If you want to learn about all the new components in detail and understand how to set up and use them from scratch, follow this guide:

Transitioning from VisLab to Unity: Even before VisionLib 3.0, we started transferring many functions and workflows from VisLab to Unity.

During this transition, you can already use the ›ModelSetup Scene‹ to debug and optimize your tracking, as well as its settings and parameters. Read how to do that here:

Advanced Compatibility & Flexibility

All VisionLib features are now compatible with each other: you can use Multi Model Tracking together with Image Injection or Model Injection, and with textured models. Multi Model Tracking can therefore be used on all platforms and devices.

The result: You can now combine features without any programming overhead.

Runtime Optimization

Many of the underlying tasks that VisionLib executes have been optimized, further improving the performance of the SDK.

The result: Tracking is smoother, and it has become easier to track objects with complex data.

InitPose Guidance

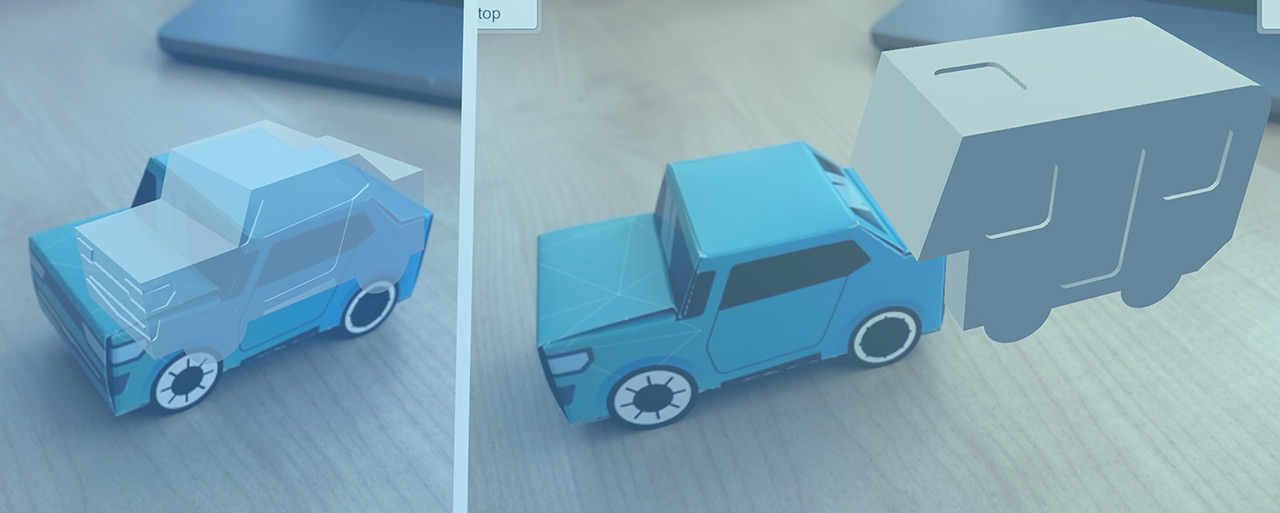

AR apps usually use different 3D models for augmentation than for tracking. However, sometimes you want to present the tracked model or some other guidance in advance while VisionLib searches for the physical object in the video stream during initialization.

Setting this “Init Pose Guidance” and controlling what’s used for tracking and augmentation have now been centralized and made easier to use.

The result: There is now an explicit shortcut for controlling this in the TrackingAnchor component.

Init Pose Interaction

Version 3.0 adds the ability to adjust the InitPose at runtime via user input.

The result: If desired, users can modify the initial pose themselves instead of moving the mobile device around the physical object until their view of it matches the predefined initial pose.

VisionLib on Magic Leap 2

VisionLib can now be used with both Magic Leap 2 XR glasses and HoloLens.

The result: You can use VisionLib and its model tracking on two of the most powerful XR devices in the industry.
